Why transaction intelligence and customer understanding remain two separate systems - and what it costs banks every day.

A gap that was designed in, not left by accident

Most banks can reconstruct any transaction in their history with near-perfect fidelity: the amount, the counterparty, the channel, the settlement path, the timestamp to the millisecond. That capability is real, hard-won, and genuinely impressive.

But ask a different question - why did this customer move money at this moment, and what does it signal about what they need next - and the confidence evaporates.

This is not a data volume problem - banks are drowning in data. It is an architecture problem, and it runs deep.


Transaction systems were built for a specific purpose: to protect money, ensure settlement, and satisfy regulatory reporting. They were engineered for completeness and auditability, not for inference or context. Customer understanding was added later, in layers, across different teams, different platforms, and different definitions of what a ‘customer’ even is.

The result is two separate systems of record that rarely speak to each other in real time: one that knows everything about what happened, and one that struggles to explain why.

Transaction systems tell you what happened with precision. Growth requires understanding why it happened at that moment. These are not the same problem, and they have never shared the same architecture.

The structural fault lines CDOs know well

For data leaders in financial services, this gap manifests in a handful of recurring and deeply frustrating patterns.

Fragmented identity across systems

A customer in the core banking system, the CRM, the mobile app, and the contact centre platform is often four slightly different people. Name formatting, address variations, account linkage logic, and the absence of a persistent, canonical customer identifier mean that building a unified view requires constant reconciliation work, which is expensive, error-prone, and never quite finished.
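To make the idea of a canonical identifier concrete, here is a minimal sketch of an identity crosswalk: a lookup that maps each system's local record to one shared customer ID. The class name, system names, and IDs are all hypothetical, and real identity resolution also needs matching logic (names, addresses, account links) that this sketch deliberately leaves out.

```python
class IdentityCrosswalk:
    """Maps (source_system, local_id) pairs to one canonical customer ID.

    Illustrative only: the matching step that decides which records
    belong together is assumed to have happened elsewhere.
    """

    def __init__(self):
        self._links = {}

    def link(self, system, local_id, canonical_id):
        # Record that a source-system record belongs to a canonical customer.
        self._links[(system, local_id)] = canonical_id

    def resolve(self, system, local_id):
        # Return the canonical ID, or None if the record is still unmatched.
        return self._links.get((system, local_id))


crosswalk = IdentityCrosswalk()
crosswalk.link("core_banking", "ACC-001", "CUST-42")
crosswalk.link("crm", "crm-9913", "CUST-42")
crosswalk.link("mobile_app", "u_7f3a", "CUST-42")

# Two records from different systems resolve to the same person.
same_person = (
    crosswalk.resolve("core_banking", "ACC-001")
    == crosswalk.resolve("crm", "crm-9913")
)
```

The point of the sketch is the shape of the dependency: every downstream view asks the crosswalk who the customer is, rather than each system reconciling identities on its own.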

Event data without behavioural context

A customer logging into the app, browsing mortgage calculators, and then calling the contact centre within a 48-hour window is demonstrating something. But if those three signals live in three separate systems with no shared session or intent layer, the pattern is invisible. Teams see isolated events. The customer’s actual intent goes unread.
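As a rough illustration of what a shared intent layer would do with those three signals, the sketch below groups events by customer and checks whether all of them occur inside one 48-hour window. The event names, the tuple layout, and the assumption that every event already carries a canonical customer ID are ours, not a description of any particular platform.

```python
from datetime import datetime, timedelta

WINDOW = timedelta(hours=48)


def find_intent_pattern(events, required_types, window=WINDOW):
    """Return customer IDs whose events cover all required_types within `window`.

    events: iterable of (customer_id, event_type, timestamp) tuples,
    assumed to be already keyed by a canonical customer ID.
    """
    by_customer = {}
    for customer_id, event_type, ts in events:
        by_customer.setdefault(customer_id, []).append((ts, event_type))

    matches = set()
    for customer_id, items in by_customer.items():
        items.sort()  # chronological order
        for i, (start, _) in enumerate(items):
            # Event types seen within `window` of this starting event.
            seen = {etype for ts, etype in items[i:] if ts - start <= window}
            if required_types <= seen:
                matches.add(customer_id)
                break
    return matches


events = [
    ("CUST-42", "app_login", datetime(2024, 3, 4, 9, 0)),
    ("CUST-42", "mortgage_calculator_view", datetime(2024, 3, 4, 9, 5)),
    ("CUST-42", "contact_centre_call", datetime(2024, 3, 5, 16, 30)),
    ("CUST-77", "app_login", datetime(2024, 3, 1, 9, 0)),
    ("CUST-77", "mortgage_calculator_view", datetime(2024, 3, 1, 9, 10)),
    ("CUST-77", "contact_centre_call", datetime(2024, 3, 6, 11, 0)),
]
pattern = {"app_login", "mortgage_calculator_view", "contact_centre_call"}
matched = find_intent_pattern(events, pattern)
```

In this made-up data only CUST-42 matches, because CUST-77's call comes five days after the browsing session. None of this works without the identity layer: if the three systems disagree on who the customer is, the events never land in the same group.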

Latency that kills opportunity windows

Let’s consider some high-value customer moments:

- A salary increase visible in account activity

- A large inbound transfer

- A sudden shift in spending patterns

These are time-sensitive signals. By the time they appear in a weekly MI report or a batch-processed segment refresh, the window has often closed. The customer has already decided, or has been approached by a competitor who moved faster.
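One of these signals, the large inbound transfer, is simple enough to evaluate as each transaction arrives rather than in a weekly batch. The sketch below is one hypothetical rule, not a recommended model: the function name, the multiple of five, and the minimum history of three transfers are illustrative assumptions that any real implementation would need to tune and govern.

```python
from statistics import median


def is_large_inbound(amount, prior_inbound_amounts, multiple=5.0, min_history=3):
    """Flag an inbound transfer that is unusually large for this customer.

    A transfer counts as large when it is at least `multiple` times the
    median of the customer's previous inbound amounts. Both thresholds
    are illustrative assumptions.
    """
    if len(prior_inbound_amounts) < min_history:
        return False  # too little history to judge
    return amount >= multiple * median(prior_inbound_amounts)


salary_history = [2100, 2150, 2100, 2200]

flag_windfall = is_large_inbound(25000, salary_history)  # well above 5x the median
flag_regular = is_large_inbound(2300, salary_history)    # close to a normal month
```

Because the check needs only the incoming amount and a short per-customer history, it can run at the moment the transaction posts, which is what keeps the opportunity window open.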

Siloed customer views across teams

Wealth managers, retail relationship managers, lending teams, and operations each hold a different version of the same customer. None of these is wrong, exactly, but none is complete. Cross-sell and retention opportunities fall into the gaps between these views because no single team has the whole picture.

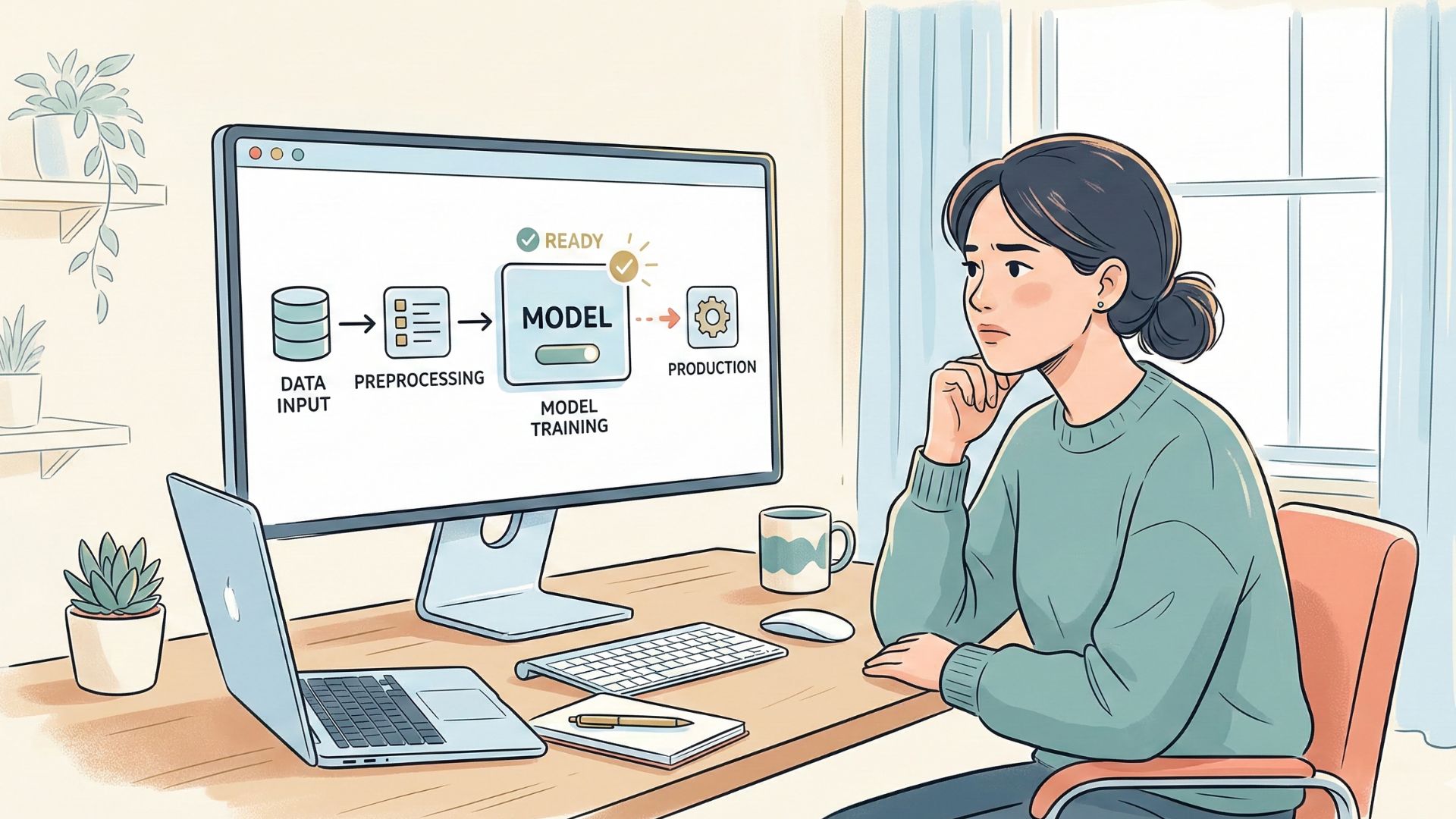

Model governance and regulatory overhead

Any system that moves from observing customer behaviour to acting on it, whether through next-best-action models, propensity scoring, or real-time decisioning, immediately enters the territory of model risk management, explainability requirements, and data consent obligations under frameworks like GDPR. These constraints are not optional, and solutions that ignore them create compliance exposure that quickly outweighs the commercial benefit.


Why the standard responses have not worked

The financial services industry has spent heavily on this problem. CRM platforms, customer data platforms, real-time analytics infrastructure, data lakes, and event streaming architectures have all been deployed with genuine ambition.

And yet the gap persists. Why?

Because the technical investment has outpaced the organisational and architectural alignment needed to make it work. A real-time event stream is only useful if the identity layer is clean enough to associate events with the right customer. A next-best-action model is only trustworthy if the feature inputs are governed, documented, and explainable to a regulator. A unified customer view only stays unified if the data contracts between systems are maintained and monitored.

The failure mode is usually not the technology. It is the assumption that the technology alone closes the gap. What banks actually need is a coherent data architecture where transaction systems, behavioural signals, and customer context are connected by design, not by periodic reconciliation.

The CDOs making progress are not buying another platform. They are redesigning how customer context flows through the organisation - in real time, with governance built in from the start.

What closing the gap actually requires

Based on what we see across institutions of varying scale and complexity, the CDOs making meaningful progress share a few common approaches.

- A persistent, canonical customer identity.

Before any contextual intelligence is possible, identity resolution has to be solved at the infrastructure level. This means a stable identifier that survives system migrations, channel additions, and product changes - and that propagates in real time, not in overnight batch.

- Event streaming with semantic enrichment.

Raw event logs are not the same as behavioural intelligence. The architecture needs a layer that interprets events in context. Not just that a customer visited the mortgage section of the app, but that this visit followed a salary increase and preceded a Google search for fixed-rate products. That interpretive layer requires both technical infrastructure and clear data product ownership.

- Latency that matches the opportunity window.

Not every customer signal requires sub-second response. But institutions that rely entirely on batch processing will consistently miss the moments that matter most. Defining which signals require real-time action and building the streaming infrastructure to support those specifically is more pragmatic than trying to make everything real-time at once.

- Governance and explainability as first-class requirements.

Any decisioning system that touches customer data in a regulated environment needs to be able to answer:

- What data was used?

- What model produced this output?

- How would you explain this decision to a customer or regulator?
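One way to keep those three questions answerable is to write a small, immutable record alongside every automated decision. The sketch below is a hypothetical shape for such a record; the field names, the model name, and the `consent_basis` field are assumptions for illustration, not a regulatory template.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    customer_id: str
    decision: str
    model_name: str        # what model produced this output
    model_version: str
    input_features: dict   # what data was used
    explanation: str       # plain-language reason for a customer or regulator
    consent_basis: str     # assumed field: the basis on which the data was used
    decided_at: str


def record_decision(customer_id, decision, model_name, model_version,
                    input_features, explanation, consent_basis):
    # Stamp the record with a UTC timestamp at the moment of decision.
    return DecisionRecord(
        customer_id=customer_id,
        decision=decision,
        model_name=model_name,
        model_version=model_version,
        input_features=input_features,
        explanation=explanation,
        consent_basis=consent_basis,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


record = record_decision(
    customer_id="CUST-42",
    decision="offer_mortgage_consultation",
    model_name="next_best_action",  # hypothetical model name
    model_version="1.3.0",
    input_features={"salary_increase_detected": True, "mortgage_page_views_7d": 3},
    explanation="Recent salary increase followed by mortgage calculator use.",
    consent_basis="marketing_consent",
)

# One JSON line per decision, suitable for an append-only audit log.
audit_line = json.dumps(asdict(record), sort_keys=True)
```

Writing the record at decision time, rather than reconstructing it later, is the design choice that matters: the inputs and the model version are captured as they were when the decision was made.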

- A shared customer surface across teams.

The organisational counterpart to unified data is a shared interface where relationship managers, product teams, and operations see the same customer context. Not the same dashboard, necessarily, but the same underlying truth - enriched for each team’s specific purpose.


Parkar point of view

The institutions that move first will set the standard

The underlying dynamic in financial services data is shifting in a way that makes this more urgent than it might appear. As open banking matures and data portability increases, the competitive advantage of having more transaction history erodes. What does not erode is the ability to interpret that history faster, more accurately, and in a way that drives a better customer conversation.

At Parkar, we work with financial institutions that have reached the limits of what more data infrastructure alone can deliver. The challenge is no longer instrumentation; it is integration. It is connecting what banks know about money to what they understand about the people behind it, in a way that is fast enough to act on, governed well enough to trust, and coherent enough to share across teams.

This is not a single-sprint problem, and it is not solved by a single platform. It requires deliberate architectural thinking, clear data product ownership, and the organisational will to treat customer understanding with the same rigour that has always been applied to transaction systems.

The next phase of competitive advantage in banking belongs to institutions that can see customers with the same clarity they see money - without compromising the trust that makes that data available to them in the first place.

If your teams can reconstruct any transaction in your history but pause when asked which customer actions are driving growth right now - and why - the gap is already costing you today.

We’d welcome a conversation about where the gap shows up in your institution and what closing it would make possible.